DOWNTIME SOLUTIONING

Identifying System Failures and Restoring Confidence in Clinical Operations

Overview

A critical clinical system experienced repeated downtime incidents that disrupted workflows across multiple departments. Each outage forced clinicians into manual fallback processes, slowed patient throughput, and eroded trust in the system’s reliability.

I led a cross‑functional root cause analysis (RCA) to identify failure points, quantify operational impact, and drive a set of corrective actions that strengthened system stability and improved clinical readiness for future incidents.

The Problem

Downtime incidents were becoming more frequent and more disruptive. Clinicians reported:

Lost or delayed access to essential systems

Confusion about when to switch to downtime procedures

Documentation backlogs during system restoration

Communication gaps between technical teams and frontline staff

Initial investigations focused on individual outages, but patterns suggested deeper systemic issues. Leadership needed a clear understanding of why the failures were occurring and what must change to prevent them.

Client

HCA

Service

DOWNTIME ROOT CAUSE ANALYSIS

Industry

Hospitals

Year

2023

Process

I partnered with IT infrastructure, application support, clinical operations, and governance teams to reconstruct the timeline and impact of each incident. The analysis included:

Logs and system performance traces

Timeline reconstruction across departments

Failure mode categorization

Workflow observation during active downtimes

Interviews with clinical staff impacted by the outages

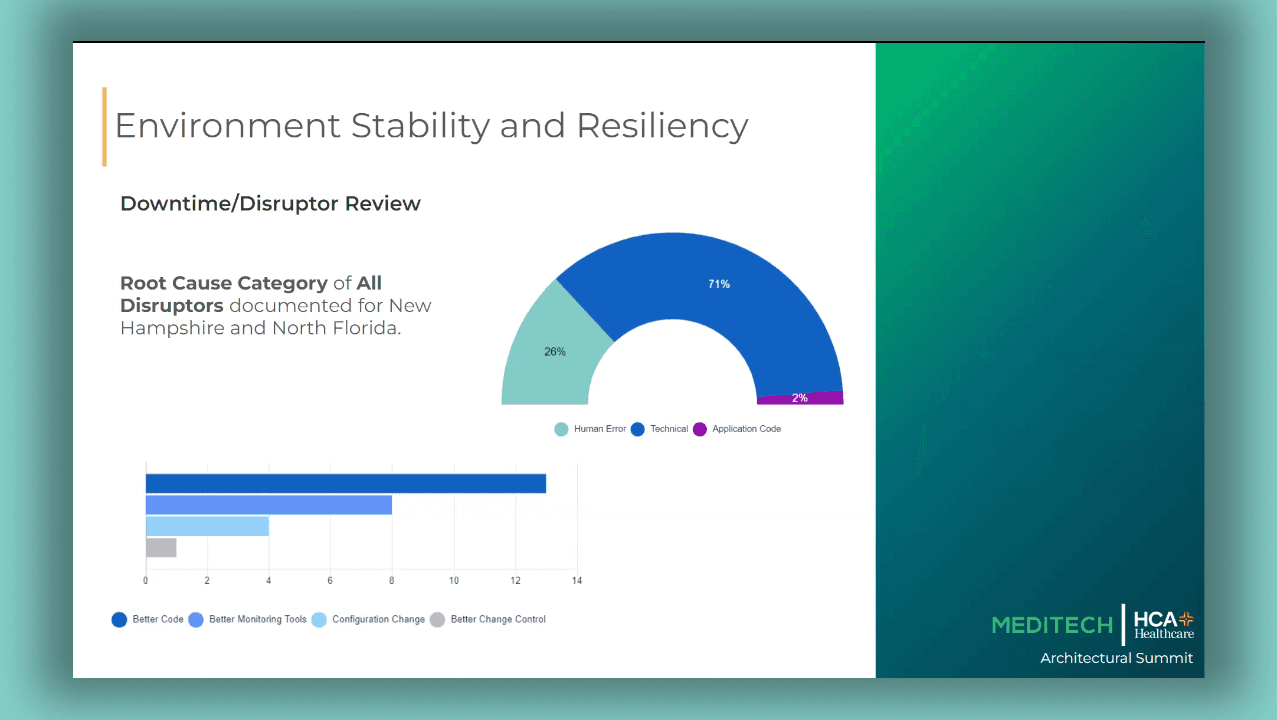

Through this work, several root causes emerged:

A single failure point within the storage environment

Monitoring gaps that delayed detection

Unclear escalation pathways during incident response

Inconsistent downtime readiness across clinical teams

These findings formed the basis for targeted operational and technical reforms.

The Solution

We implemented a set of corrective actions designed to improve uptime, strengthen early detection, and reduce operational disruption:

Infrastructure remediation to eliminate the single point of failure

Enhanced monitoring and alerting to detect performance degradation earlier

Standardized escalation procedures to improve response speed and coordination

Refined downtime workflows so departments could transition smoothly during an outage

Training and communication updates to increase clarity across clinical and technical teams

By addressing both the technical and human factors behind the outages, we created a more resilient ecosystem.

Impact

The improvements delivered measurable gains in reliability and operational readiness:

Reduction in unplanned downtime after infrastructure fixes

Faster incident detection through refined monitoring

Higher clinician confidence in both the system and downtime protocols

Streamlined recovery processes that reduced backlog after each incident

These changes improved continuity of care and reduced the operational burden on clinical teams.

What I Learned

Effective root cause analysis requires looking beyond the immediate failure. The most important lesson:

Stability comes from strengthening both the technology and the workflows that surround it.

By pairing technical fixes with clear communication, better training, and consistent escalation pathways, organizations can reduce the operational cost of outages and restore trust in their systems.